Here are a few important ways to speed up page load times, together with the load time recorded after each one on a typical WordPress web site. While WordPress is hardly an optimized web application, it benefits from the same speedup methods as most web applications.

I used Google Chrome Developer Tools to time network transfers and page load times. There are various web-based tools available as well:

- https://developers.google.com/speed/pagespeed

- https://tools.pingdom.com

- https://www.webpagetest.org
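For repeatable measurements from a script, a rough Python sketch along the following lines can complement the browser tools. It is not how the timings in this post were taken. The function name `time_request` and its `opener` parameter are my own illustrative choices; `opener` only exists so the function can be exercised with a stand-in instead of a real network call.

```python
import time
import urllib.request
from typing import Callable, Tuple


def time_request(url: str, timeout: float = 10.0,
                 opener: Callable = urllib.request.urlopen) -> Tuple[float, float]:
    """Return (ttfb_seconds, total_seconds) for a single GET of `url`.

    `opener` is a hypothetical hook: anything that accepts (url, timeout=...)
    and returns a context manager with a read() method will do.
    """
    start = time.perf_counter()
    with opener(url, timeout=timeout) as response:
        # The headers have arrived once the opener returns; reading one
        # byte marks the arrival of the first byte of the body.
        response.read(1)
        ttfb = time.perf_counter() - start
        # Drain the rest of the body to time the full transfer.
        response.read()
    total = time.perf_counter() - start
    return ttfb, total
```

Calling `time_request("https://example.com/")` times one fetch of the HTML document only, including DNS lookup and connection setup. It does not load images, CSS or JavaScript, so the totals will be lower than the page load time a browser reports.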

Initial speed — 1.412 sec (TTFB 0.12 sec)

This was the speed on a fresh install of a WordPress web site on a small VPS running Nginx and PHP-FPM.

Enabling GZip compression — 1.326 sec (TTFB 0.13 sec)

Compressing network transfers can greatly reduce transfer sizes, especially for text-based files such as HTML, CSS and JavaScript. The CPU overhead on modern servers is negligible, and the compressed output can be cached if required.
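To get a feel for how well markup compresses, here is a small Python sketch using the standard `gzip` module. The sample page is made up and far more repetitive than a real one, so treat the ratio it prints as an illustration rather than a benchmark.

```python
import gzip

# Hypothetical sample page: repetitive markup, which compresses very well.
html = (
    "<html><body>"
    + "<p class=\"entry\">Hello, WordPress!</p>" * 200
    + "</body></html>"
).encode("utf-8")

# Level 6 is a common middle ground between CPU cost and compression ratio.
compressed = gzip.compress(html, compresslevel=6)

ratio = len(compressed) / len(html)
print(f"original: {len(html)} bytes, gzipped: {len(compressed)} bytes "
      f"({ratio:.1%} of original)")

# Decompressing restores the exact original bytes.
restored = gzip.decompress(compressed)
```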

PHP Opcode cache — 1.299 sec (TTFB 0.124 sec)

PHP scripts are typically compiled to bytecode on demand. By caching this compilation with OPcache or APC, page load times and server load can be significantly reduced. APC also included a fast key/value cache, which has since been replaced by APCu.

WordPress Cache — 0.733 sec (TTFB 0.122 sec)

There are many WordPress cache plugins available, which reduce the amount of PHP code that has to be run on every request. Some caches can generate flat files, which are significantly faster to serve and can be served directly by Nginx.
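The flat-file idea can be sketched in a few lines of Python. This is not how any particular WordPress plugin works; `render_page`, `CACHE_DIR` and `TTL_SECONDS` are hypothetical stand-ins chosen for illustration, with `render_page` playing the role of the expensive PHP rendering step.

```python
import hashlib
import tempfile
import time
from pathlib import Path

CACHE_DIR = Path(tempfile.mkdtemp(prefix="page-cache-"))  # hypothetical location
TTL_SECONDS = 300  # hypothetical lifetime of a cached page

render_calls = []  # records every slow render, to show how often it happens


def render_page(url_path: str) -> str:
    """Stand-in for the expensive WordPress/PHP rendering step."""
    render_calls.append(url_path)
    time.sleep(0.05)  # simulate database queries and template work
    return f"<html><body><h1>{url_path}</h1></body></html>"


def cache_file(url_path: str) -> Path:
    """Map a URL path to a flat file name inside the cache directory."""
    digest = hashlib.sha256(url_path.encode("utf-8")).hexdigest()
    return CACHE_DIR / f"{digest}.html"


def get_page(url_path: str) -> str:
    """Serve a fresh flat file if one exists, otherwise render and store it."""
    path = cache_file(url_path)
    if path.exists() and time.time() - path.stat().st_mtime < TTL_SECONDS:
        return path.read_text(encoding="utf-8")  # cache hit: no rendering
    html = render_page(url_path)                 # cache miss: slow path
    path.write_text(html, encoding="utf-8")
    return html
```

Calling `get_page("/hello")` twice renders the page only once; the second call is a plain file read, which is the kind of work a web server can do without involving PHP at all.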

Nginx FastCGI Cache — 0.731 sec (TTFB 0.119 sec)

Nginx is able to use a fast memory/disk cache to cache responses from PHP-FPM, further reducing page load times and server load. This can be very beneficial on web sites with high load.

There are many other ways to speed up page load times, including concatenating and minifying dependencies and optimizing images. It is also important to optimize client-side JavaScript to allow the user’s web browser to display content quickly.

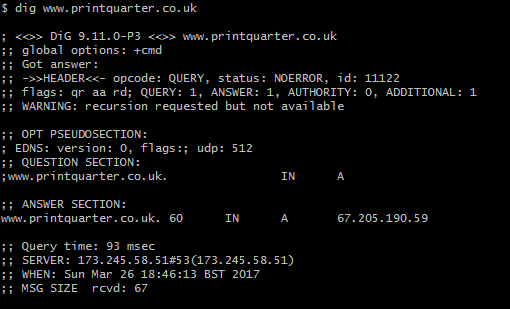

AnyCast DNS

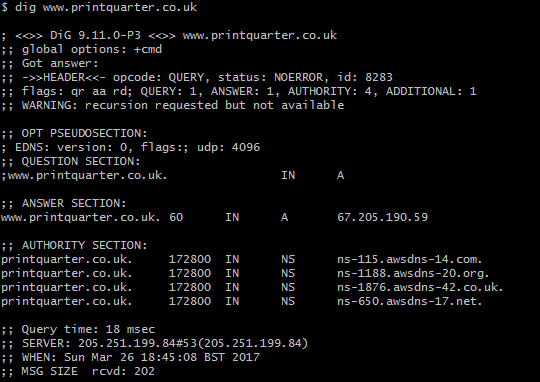

An initial visit to a web site requires a DNS lookup. Traditionally DNS has no way to send requests to the geographically closest server, but this is possible with AnyCast DNS. This feature is available from many providers including Amazon’s Route 53, Google’s Cloud Platform and Microsoft Azure. It functions by allowing multiple servers distributed throughout the world to have the same IP address, so that network routing delivers each query to the nearest one.

By using AnyCast DNS, I was able to reduce an initial DNS request from 93 milliseconds to 18 milliseconds. Combined with having an optimized web server geographically close, even an initial visit to a web page can appear almost instantaneous.
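One rough way to time a lookup yourself is sketched below in Python. It is not the method used for the figures above, and the function name `time_dns_lookup` and its `resolver` parameter are my own assumptions; `resolver` defaults to the system resolver via `socket.getaddrinfo` and can be swapped for a stand-in.

```python
import socket
import time
from typing import Callable


def time_dns_lookup(hostname: str,
                    resolver: Callable = socket.getaddrinfo) -> float:
    """Return the seconds taken to resolve `hostname` once.

    `resolver` is a hypothetical hook taking (host, port); by default the
    operating system's resolver is used.
    """
    start = time.perf_counter()
    resolver(hostname, None)
    return time.perf_counter() - start


# The reduction reported above, as a fraction of the original lookup time.
before_ms, after_ms = 93, 18
reduction = (before_ms - after_ms) / before_ms  # about 0.81
```

Keep in mind that operating systems and local resolvers cache answers, so only the first lookup of a name is likely to reflect the real network cost; repeated calls such as `time_dns_lookup("example.com")` will usually return much smaller values.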

Conclusion

Subtracting the 0.116 second round trip time to the server from the final TTFB of 0.119 seconds, these optimizations reduced the effective Time To First Byte to 3 milliseconds. On a busy server, these optimizations can make a significant difference to the capacity of the server.
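The arithmetic behind these figures can be reproduced with a short Python sketch. The timings are copied from the section headings above; the variable names are my own.

```python
# (step, page load in seconds, TTFB in seconds), copied from the headings above.
timings = [
    ("Initial", 1.412, 0.120),
    ("GZip compression", 1.326, 0.130),
    ("PHP opcode cache", 1.299, 0.124),
    ("WordPress cache", 0.733, 0.122),
    ("Nginx FastCGI cache", 0.731, 0.119),
]
ROUND_TRIP = 0.116  # round trip time to the server, in seconds

baseline_load = timings[0][1]
for name, load, ttfb in timings:
    # Fraction of the initial page load time that has been removed so far.
    saved = (baseline_load - load) / baseline_load
    # TTFB with the network round trip subtracted, in milliseconds.
    effective_ttfb_ms = (ttfb - ROUND_TRIP) * 1000
    print(f"{name:<20} load {load:.3f}s ({saved:5.1%} shorter)  "
          f"effective TTFB {effective_ttfb_ms:3.0f} ms")
```

The last row gives the 3 millisecond effective TTFB quoted above, with a page load time about 48% shorter than the initial one.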