A video I took last year, using a 360 camera mounted on a backpack, on a walk down the San Antonio Riverwalk in Texas:

All posts by Jonathan Hassall

BioCity’s new Discovery building was unveiled a few days ago, with a unique solar installation, titled Corona, designed by Nottingham artist Wolfgang Buttress in partnership with Nottingham Trent University physicist Dr Martin Bencsik. Fibre-optic lights and aluminum tubes use real-time solar data from NASA, creating a light display which is always unique.

My 360 video with spatial audio (uses a different mix of surround sound microphones as you spin your virtual head to simulate reality):

360 still images with audio:

I tried adding spatial audio to a 360 VR video. In theory this should add realism, because the audio can be processed to play back differently depending on the direction the viewer is facing.
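To make the idea concrete, here is a minimal sketch of how a player could turn four-channel first-order ambisonic audio into left and right signals that change with the viewer's direction. It assumes the ambiX channel order (W, Y, Z, X) with SN3D normalisation and uses two virtual cardioid microphones. Real players typically use more sophisticated binaural rendering, and the function names here are illustrative only.

```javascript
// One ambiX (ACN/SN3D) first-order frame is [W, Y, Z, X].
// Encode a mono sample arriving from a horizontal direction.
// azimuth is in radians: 0 = front, positive = towards the left.
function encodeHorizontal(sample, azimuth) {
  return [sample, sample * Math.sin(azimuth), 0, sample * Math.cos(azimuth)];
}

// Output of a virtual cardioid microphone pointing at `azimuth`.
function virtualCardioid([w, y, , x], azimuth) {
  return 0.5 * (w + x * Math.cos(azimuth) + y * Math.sin(azimuth));
}

// Simplified stereo decode: one virtual microphone per ear,
// pointing 90 degrees either side of where the viewer is looking.
function decodeStereo(frame, viewerYaw) {
  return {
    left: virtualCardioid(frame, viewerYaw + Math.PI / 2),
    right: virtualCardioid(frame, viewerYaw - Math.PI / 2),
  };
}

// A sound directly to the left of the camera.
const frame = encodeHorizontal(1, Math.PI / 2);
console.log(decodeStereo(frame, 0));       // facing front: almost all in the left ear
console.log(decodeStereo(frame, Math.PI)); // turned around: almost all in the right ear
```

The same recorded frame produces a different left/right balance as the yaw changes, which is the effect described above.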

I updated the Zoom H2n to firmware version 2.00 as described here https://www.zoom.co.jp/H2n-update-v2, and set it to record uncompressed WAV at 48 kHz, 16-bit.

I attached the audio recorder to my Ricoh Theta S camera. I orientated the camera so that the record button was facing toward me, and the Zoom H2n’s LCD display was facing away from me. I pressed record on the sound recorder and then on the video camera. I then needed a cue that was both audible and visible so I could synchronize the two recordings in post production, and clicking my fingers worked perfectly.

I installed the plugins from http://www.matthiaskronlachner.com/?p=2015. I created a new project in Adobe Premiere, and a new sequence with Audio Master set to Multichannel and 4 adaptive channels. Next I imported the audio and video tracks, and trimmed them so that they lined up at the point where I clicked my fingers.

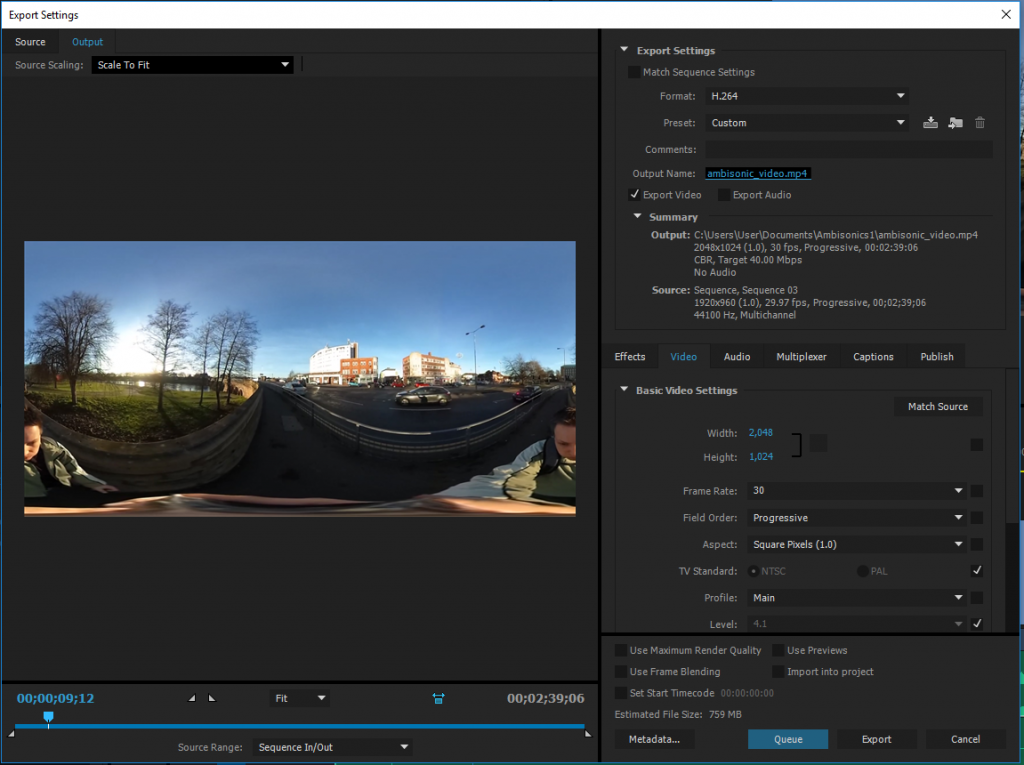

Exporting was slightly more involved. I exported two files, one for video and one for audio.

For the video export, I used the following settings:

- Format: H264

- Width: 2048 Height: 1024

- Frame Rate: 30

- Field Order: Progressive

- Aspect: Square Pixels (1.0)

- Profile: Main

- Bitrate: CBR 40 Mbps

- Audio track disabled

For the audio export, I used the following settings:

- Format: Waveform Audio

- Audio codec: Uncompressed

- Sample rate: 48000 Hz

- Channels: 4 channel

- Sample Size: 16 bit

I then used FFmpeg to combine the two files with the following command:

```shell
ffmpeg -i ambisonic_video.mp4 -i ambisonic_audio.wav -channel_layout 4.0 -c:v copy -c:a copy final_video.mov
```
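If you need to repeat this step for several clips, the same command can be wrapped in a small Node.js script. This is only a sketch: the helper names `buildMuxArgs` and `mux` are my own, and it assumes `ffmpeg` is available on your PATH.

```javascript
const { spawn } = require('child_process');

// Build the same argument list as the command above.
function buildMuxArgs(videoPath, audioPath, outputPath) {
  return [
    '-i', videoPath,          // video-only export
    '-i', audioPath,          // 4-channel WAV export
    '-channel_layout', '4.0', // declare a 4-channel layout for the audio
    '-c:v', 'copy',           // copy the video stream without re-encoding
    '-c:a', 'copy',           // copy the audio stream without re-encoding
    outputPath,
  ];
}

// Run ffmpeg and resolve when it exits successfully.
function mux(videoPath, audioPath, outputPath) {
  return new Promise((resolve, reject) => {
    const args = buildMuxArgs(videoPath, audioPath, outputPath);
    const child = spawn('ffmpeg', args, { stdio: 'inherit' });
    child.on('error', reject);
    child.on('close', code =>
      code === 0 ? resolve() : reject(new Error(`ffmpeg exited with code ${code}`)));
  });
}

// Show the command that would be run, without running it.
const args = buildMuxArgs('ambisonic_video.mp4', 'ambisonic_audio.wav', 'final_video.mov');
console.log(['ffmpeg', ...args].join(' '));

// To actually run it:
// mux('ambisonic_video.mp4', 'ambisonic_audio.wav', 'final_video.mov');
```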

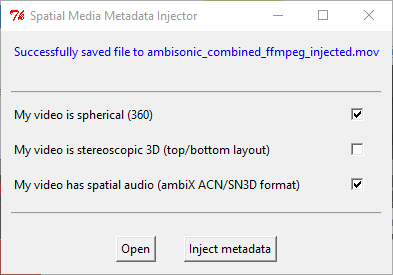

I then injected 360 metadata using the 360 Video Metadata app, making sure to tick both ‘My video is spherical (360)’ and ‘My video has spatial audio (ambiX ACN/SN3D format)’.

Finally, I uploaded it to YouTube. It took an extra five hours for YouTube to process the spatial audio track. Both the web player and the native Android and iOS apps appear to support spatial audio.

If you have your sound recorder orientated incorrectly, you can correct it using the plugins. In my case, I used the Z‑axis rotation to effectively turn the recorder around.
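For the curious, a Z-axis rotation of first-order ambisonic audio is a small piece of arithmetic. The sketch below is not the plugin's code; it assumes ambiX channel order (W, Y, Z, X) and a counter-clockwise rotation angle in radians, and the function name `rotateZ` is my own.

```javascript
// Rotate one first-order ambiX frame [W, Y, Z, X] about the vertical (Z) axis.
function rotateZ([w, y, z, x], angle) {
  const cos = Math.cos(angle);
  const sin = Math.sin(angle);
  return [
    w,                 // W is omnidirectional, so rotation does not change it
    x * sin + y * cos, // Y (left/right)
    z,                 // Z (up/down) lies on the rotation axis, unchanged
    x * cos - y * sin, // X (front/back)
  ];
}

// A sound from the front, captured by a recorder mounted back to front,
// appears to come from behind (X = -1).
const recorded = [1, 0, 0, -1];

// Turning the sound field by 180 degrees moves it back to the front:
// X becomes +1 again, up to floating-point rounding.
console.log(rotateZ(recorded, Math.PI));
```

A 180 degree turn simply flips the sign of the X and Y channels, which is why a reversed recorder is easy to fix after the fact.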

There are a lot of fascinating optimizations and explanations of ambisonic and spatial audio processing available to read at Wikipedia:

For comparison, the original in-camera audio (the Ricoh Theta S records in mono) can be heard here:

My Sony smartphone has an unusual TRRRS (Tip-Ring-Ring-Ring-Sleeve) connector, allowing it to use very reasonably priced noise cancelling headphones (Sony MDR-NC31EM) that have an extra microphone in each earphone.

I found that the Sony app Sound Recorder can record directly from these two microphones, which are great for binaural recording, so I gave it a go walking along a few busy streets. You can listen on YouTube and Soundcloud:

Awesome to finally get to use Webpack and Babel to transpile some ES6 code to vanilla JavaScript that even Internet Explorer can use:

ES6:

```javascript
export function arrowTest() {
    var materials = [
        'Hydrogen',
        'Helium',
        'Lithium',
        'Beryllium'
    ];

    // expected output: Array [8, 6, 7, 9]
    return materials.map(material => material.length);
}
```

Transpiled:

```javascript
function arrowTest() {
    var materials = ['Hydrogen', 'Helium', 'Lithium', 'Beryllium'];
    // expected output: Array [8, 6, 7, 9]
    return materials.map(function (material) {
        return material.length;
    });
}
```

Useful links:

Here is the first version of a simple droplet for converting and publishing 360 panoramic videos. It is intended to be used for the processed output file from a Ricoh Theta S that has the standard 1920x960 resolution. It is easy to do manually, but many people asked for an automatic droplet.

It conveniently includes 32-bit and 64-bit versions of FFmpeg for performing video conversion.

Instructions:

- Extract to your KRPano folder.

- Drag your MP4 video file to the ‘MAKE PANO (VIDEO FAST)’ droplet.

- Be patient while your video is encoded to various formats.

- Rename the finished ‘video_x’ folder to a name of your choice.

You can download the droplet here:

Recent improvements include:

- Adding three variations of quality, which can be accessed by the viewer in Settings.

- Improving the quality of the default playback setting.

- Automatically switching to the lowest quality when used on a mobile device.

- Using a single .webm video, as the format is very rarely used and very time-consuming to encode.

- Outputting to a named folder.

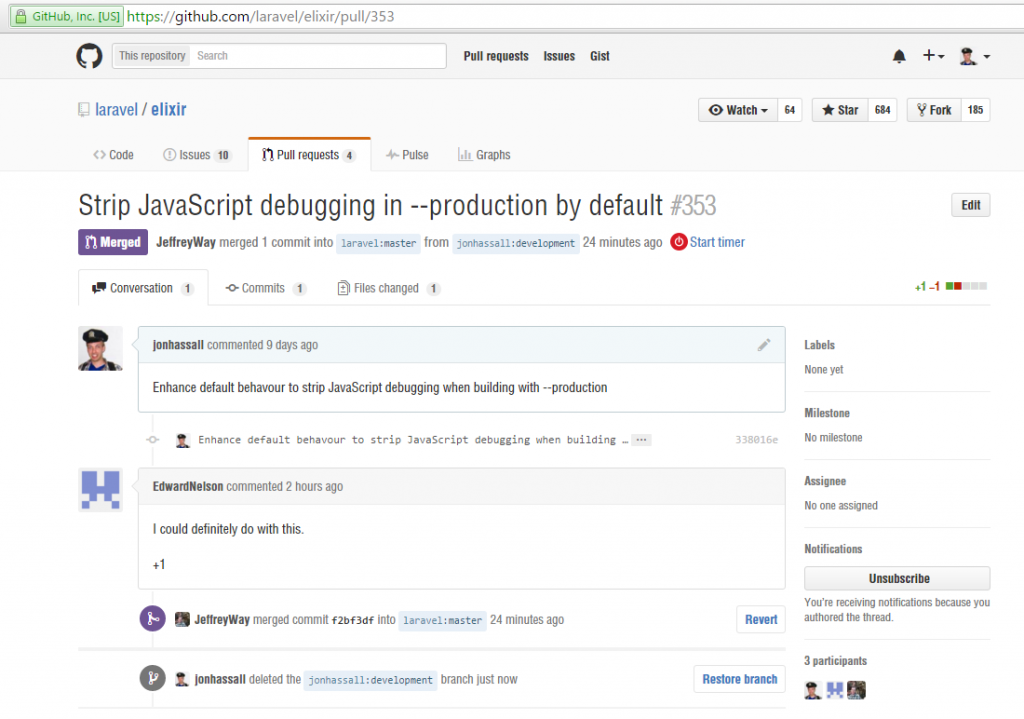

While using Gulp with Laravel’s Elixir, I found that while it minifies/uglifies JavaScript on a production build, it doesn’t strip JavaScript debugging calls. It would also have been far more time-consuming to implement this as a custom Task or Extension.

Stripping debugging allows you to freely use console.debug() and similar debugging calls in development. Left in place, these calls reduce the performance of your JavaScript application, and in some cases make it completely unusable in certain browsers.
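To show what stripping means in practice, here is a deliberately naive sketch. It is not what Elixir or any real plugin does (proper tools parse the code rather than matching text), and the function name `stripDebugLines` is my own. It only removes lines that consist of a single console call.

```javascript
// Naive illustration: drop whole lines that are a single
// console.debug/log/info/warn call. Multi-line calls are not handled.
function stripDebugLines(source) {
  const debugLine = /^\s*console\.(debug|log|info|warn)\(.*\);?\s*$/;
  return source
    .split('\n')
    .filter(line => !debugLine.test(line))
    .join('\n');
}

const input = [
  'function add(a, b) {',
  '  console.debug("adding", a, b);',
  '  return a + b;',
  '}',
].join('\n');

// Prints the function with the console.debug line removed.
console.log(stripDebugLines(input));
```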

So I did it myself, and made a pull request (GitHub) to the official Laravel Elixir repository, which was approved. Nice to give back.